These newly validated monitors give gamers even more choice when searching for a great gaming display. I have verified that nvidia-346 is the problem by specifically installing it instead of nvidia-current. More specifically, SWAG models are released under the CC-BY-NC 4.0 license. Our newest Game Ready Driver adds support for 8 new G-SYNC Compatible displays that deliver a baseline Variable Refresh Rate (VRR) experience that makes your gaming smoother and more enjoyable. Naturally, I thought I could then install CUDA with `sudo apt-get install cuda`, but this tries to install nvidia-346 on my system, which stops my system from displaying my desktop and leaves the installation incorrect. The only dependency I am aware of is that an NVIDIA driver version that supports the CUDA version is installed. It is your responsibility to determine whether you have permission to use the models for your use case. The pre-trained models provided in this library may have their own licenses or terms and conditions derived from the dataset used for training.

Thanks for your contribution to the ML community! If you’re a dataset owner and wish to update any part of it (description, citation, etc.), or do not want your dataset to be included in this library, please get in touch through a GitHub issue. CUDA applications built using CUDA Toolkit 4.2 are compatible with Kepler as long as they are built to include kernels in either Kepler-native cubin format (see Section 1. It is your responsibility to determine whether you have permission to use the dataset under the dataset’s license. After some reading, it seems that sm_86 is only available for CUDA version 11.0 and above. The current PyTorch install supports CUDA capabilities sm_37 sm_50 sm_60 sm_70. We do not host or distribute these datasets, vouch for their quality or fairness, or claim that you have license to use the dataset. Using torch 1.10.1+cu102 (NVIDIA GeForce RTX 3080): UserWarning: NVIDIA GeForce RTX 3080 with CUDA capability sm_86 is not compatible with the current PyTorch installation. This is a utility library that downloads and prepares public datasets. See the CONTRIBUTING file for how to help out. You can find the API documentation on the PyTorch website. Please refer to the PyTorch installation instructions for the details of installing PyTorch (`torch`). You also need a version of the NVIDIA CUDA Toolkit compatible with the installed driver version; see Table 1 of CUDA Compatibility (Binary Compatibility) for an overview.
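The sm_86 warning quoted above comes down to comparing the GPU's compute capability against the list of architectures the PyTorch binary was built for. The following is a minimal sketch of that comparison in plain Python, not PyTorch's actual check. The helper names `parse_arch_list` and `is_binary_compatible` are hypothetical, and the rule used (a cubin built for X.y runs on devices X.z with z >= y) deliberately ignores PTX just-in-time compilation.

```python
import re


def parse_arch_list(text):
    """Extract architectures such as 'sm_86' or 'sm86' as (major, minor) tuples."""
    caps = set()
    for digits in re.findall(r"sm_?(\d{2,3})", text):
        # The last digit is the minor version; everything before it is the major.
        caps.add((int(digits[:-1]), int(digits[-1])))
    return caps


def is_binary_compatible(device_cap, built_for):
    """Simplified rule: same major version, device minor >= compiled minor.

    Assumption: only precompiled cubins are considered; PTX forward
    compatibility is ignored in this sketch.
    """
    major, minor = device_cap
    return any(m == major and n <= minor for (m, n) in built_for)


# Architecture list taken from the warning text above.
built_for = parse_arch_list("sm_37 sm_50 sm_60 sm_70")

rtx_3080 = (8, 6)  # sm_86
print(is_binary_compatible(rtx_3080, built_for))  # False
print(is_binary_compatible((7, 5), built_for))    # True (sm_70 cubin runs on sm_75)
```

With a build whose architecture list includes an 8.x entry, the same check would pass for the RTX 3080, which is why installing a PyTorch binary built against a newer CUDA version resolves the warning.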

We recommend Anaconda as the Python package management system. The torchvision package consists of popular datasets, model architectures, and common image transformations for computer vision.

`__global__ void kernelDefault(__grid_constant__ const param_t p`

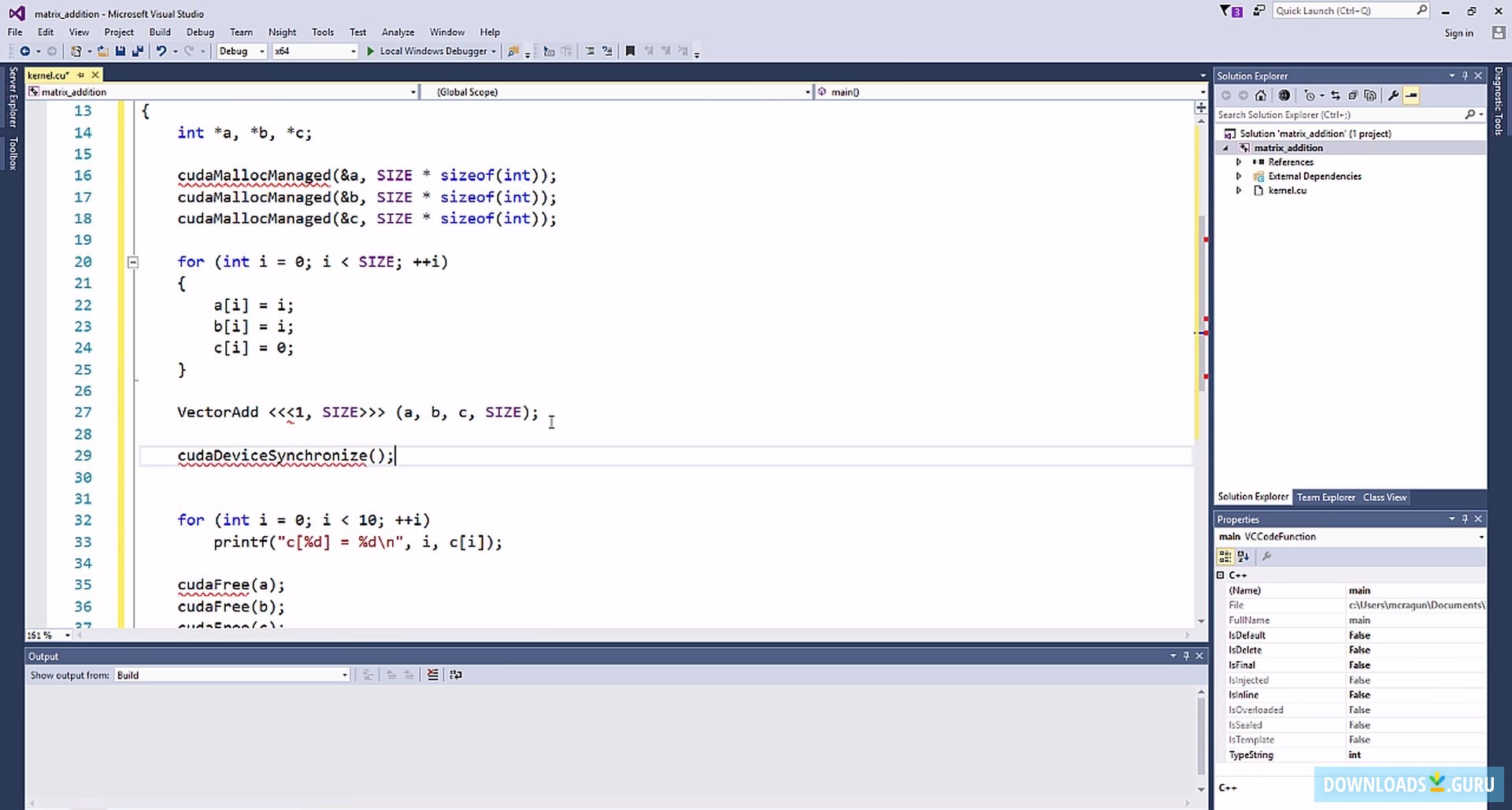

The conda install of PyTorch is a binary install. If those binaries are compiled against CUDA 10.2, you cannot use that version of PyTorch with CUDA 11.0, regardless of whether it is in the conda env or not.

Package nvidia-cuda-toolkit-gcc: focal (20.04LTS) (devel): NVIDIA CUDA development toolkit (GCC compatibility) [multiverse]; jammy (22.04LTS) (devel): NVIDIA.

CUDA kernel function parameters are passed to the device through constant memory and have been limited to 4,096 bytes. CUDA 12.1 increases this parameter limit from 4,096 bytes to 32,764 bytes on all device architectures including NVIDIA Volta and above. Previously, passing kernel arguments exceeding 4,096 bytes required working around the kernel parameter limit by copying excess arguments into constant memory with cudaMemcpyToSymbol or cudaMemcpyToSymbolAsync, using macros such as `#define KERNEL_PARAM_LIMIT (1024)` (counted in ints) and `#define CONST_COPIED_PARAMS (TOTAL_PARAMS - KERNEL_PARAM_LIMIT)`.
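To make the arithmetic behind the two limits concrete, here is a small Python sketch (not CUDA code) that splits a parameter list into the part that fits in the kernel parameter space and the part that would have to be copied to constant memory. The function name `split_params` and the example total of 8,000 ints are assumptions chosen for illustration; the byte limits are the ones stated above, and a 4-byte int is assumed.

```python
INT_SIZE_BYTES = 4        # assumption: 32-bit ints
OLD_LIMIT_BYTES = 4096    # limit before CUDA 12.1
NEW_LIMIT_BYTES = 32764   # limit from CUDA 12.1 onward


def split_params(total_ints, limit_bytes):
    """Return (ints passed as kernel parameters, ints copied to constant memory)."""
    limit_ints = limit_bytes // INT_SIZE_BYTES
    direct = min(total_ints, limit_ints)
    return direct, total_ints - direct


total = 8000  # hypothetical parameter count, 32,000 bytes in all

print(split_params(total, OLD_LIMIT_BYTES))  # (1024, 6976)
print(split_params(total, NEW_LIMIT_BYTES))  # (8000, 0)
```

Under the old limit, 1,024 ints fit directly (matching `KERNEL_PARAM_LIMIT`) and the remaining 6,976 correspond to `CONST_COPIED_PARAMS`. Under the new limit the whole 32,000-byte payload fits, so no copy to constant memory is needed.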
